Anchors in AR FoundationĪR Foundation offers two options for anchors: Therefore, our object should be a child of that. The position is then updated in Unity through AR Session Origin. This pose is tracked by the XR device (e.g., ARCore or ARKit). This corresponds to a pose in the physical environment. Holograms should be anchored to real world objects, instead of Unity’s “virtual world” coordinates. This is where anchors come into the game.

You also need to keep the real and virtual world in sync. Placing holograms in the Unity coordinate system isn’t enough.

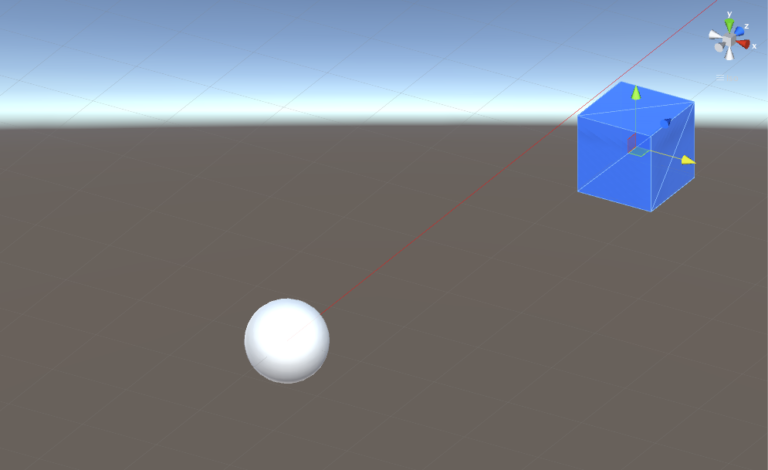

Note that we have placed the 3D model at the Unity world position but have not anchored it to the AR world yet, so that it stays in place as the phone’s knowledge of the real world evolves. To check on what kind of trackable we placed the object, a Debug.Log() statement prints out the hitType property. We can then directly forward this position information to Unity’s Instantiate() method, also providing the reference to the prefab for the 3D model to instantiate at that position. The pose property contains the position and rotation of the trackable surface that the ray hit. As they are sorted by distance, it usually makes sense to only handle the closest hit, as this is where the user will want to place the object. In case a hit was detected, our list will be filled with hit positions. You can of course limit this to what suits your scenario. In this case, it’s set to TrackableType.AllTypes, which includes both planes and feature points (and other trackables). The last parameter lets you limit with what kind of trackables you want to interact with. For parameters, only need to provide the position of the touch event (in screen coordinates) and the Hits list that will be filled with intersections of the ray and trackables. The function returns true if it hit any valid target. If not, we immediately exit the Update() function again. Simply download the unitypackage and import it to your Unity project.įirst, we check if a new touch event on the screen just began. I’ve already prepared one: the model of a transparent skull. Next, choose a 3D model to place and convert it to a prefab. Therefore, create a new script called ARPlaceHologram and attach it to ARSessionOrigin as well.

This time, there is no default prefab available for visualization. Like when using point clouds and planes, you need to add the corresponding manager script – in this case the ARRaycastManager – to the ARSessionOrigin. A persistent raycast is also a trackable through the ARRaycastManager. However, AR platforms can optimize this scenario and provide better results. Persistent raycast: it’s like performing the same raycast every frame.Single, one-time raycast: useful for example to react to a user event and to see if the user tapped on a plane to place a new object.Therefore, AR foundation comes with its own variant of raycasts. The raycast then tells you if and where this ray intersects with a trackable like a plane or a point cloud.Ī traditional raycast only considers objects present in its physics system, which isn’t the case for AR Foundation trackables. You “shoot” a ray from the position of the finger tap into the perceived AR world. If you’d like to let the user place a virtual object in relation to a physical structure in the real world, you need to perform a raycast. Accept YouTube Content AR Raycast Manager If you accept this notice, your choice will be saved and the page will refresh. By accepting you will be accessing content from YouTube, a service provided by an external third party. Please accept YouTube cookies to play this video.
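For reference, the tap-to-place logic described in the AR Raycast Manager part of this post (check for a new touch, run an AR raycast, instantiate the prefab at the closest hit) could look roughly like the sketch below. It is not the original script from the post. The field names `_prefabToPlace` and `_raycastManager` are assumptions made for illustration, and the sketch assumes Unity's legacy Input Manager (`Input.GetTouch`) plus an AR Foundation version that provides `TrackableType.AllTypes`. The object is only placed here, not anchored.

```csharp
using System.Collections.Generic;
using UnityEngine;
using UnityEngine.XR.ARFoundation;
using UnityEngine.XR.ARSubsystems;

// Attach to the same GameObject as the ARRaycastManager (the ARSessionOrigin).
[RequireComponent(typeof(ARRaycastManager))]
public class ARPlaceHologram : MonoBehaviour
{
    // Prefab of the 3D model to place; assign it in the Inspector.
    // (Field name is an assumption for this sketch.)
    [SerializeField] private GameObject _prefabToPlace;

    private ARRaycastManager _raycastManager;

    // Reused for every raycast so no new list is allocated per tap.
    private static readonly List<ARRaycastHit> Hits = new List<ARRaycastHit>();

    private void Awake()
    {
        _raycastManager = GetComponent<ARRaycastManager>();
    }

    private void Update()
    {
        // Only react when a new touch has just begun; otherwise exit early.
        if (Input.touchCount == 0) return;
        Touch touch = Input.GetTouch(0);
        if (touch.phase != TouchPhase.Began) return;

        // Shoot a ray from the touch position (screen coordinates) against all trackable types.
        if (_raycastManager.Raycast(touch.position, Hits, TrackableType.AllTypes))
        {
            // Hits are sorted by distance, so the first entry is the closest one.
            ARRaycastHit closestHit = Hits[0];
            Pose hitPose = closestHit.pose;

            // Place the model at the hit pose in Unity world space (not anchored yet).
            Instantiate(_prefabToPlace, hitPose.position, hitPose.rotation);

            Debug.Log($"Placed object on trackable type: {closestHit.hitType}");
        }
    }
}
```

To restrict placement to detected planes, the last argument could be narrowed, for example to `TrackableType.PlaneWithinPolygon`.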
